Catalog Integration Snowflake

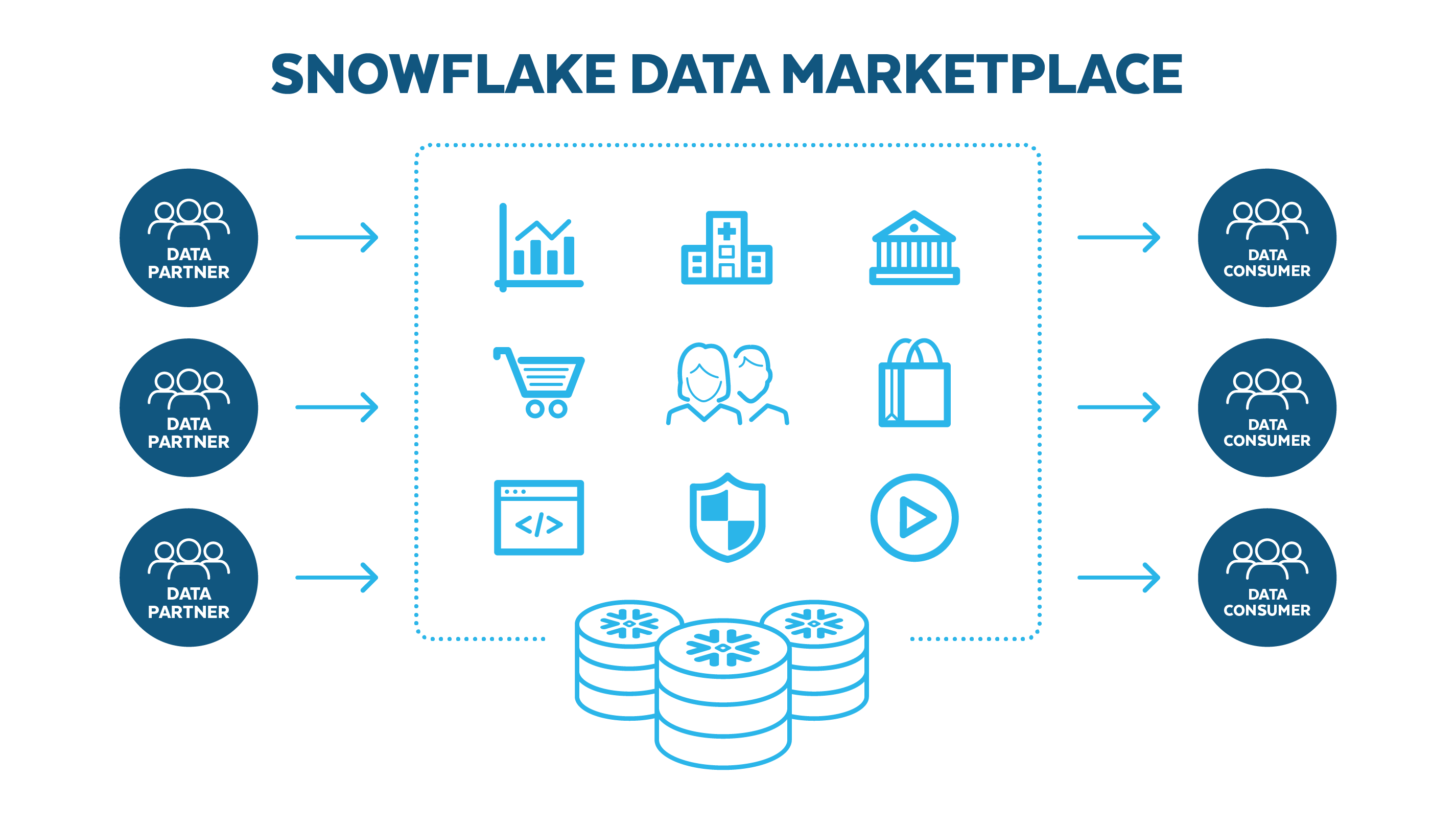

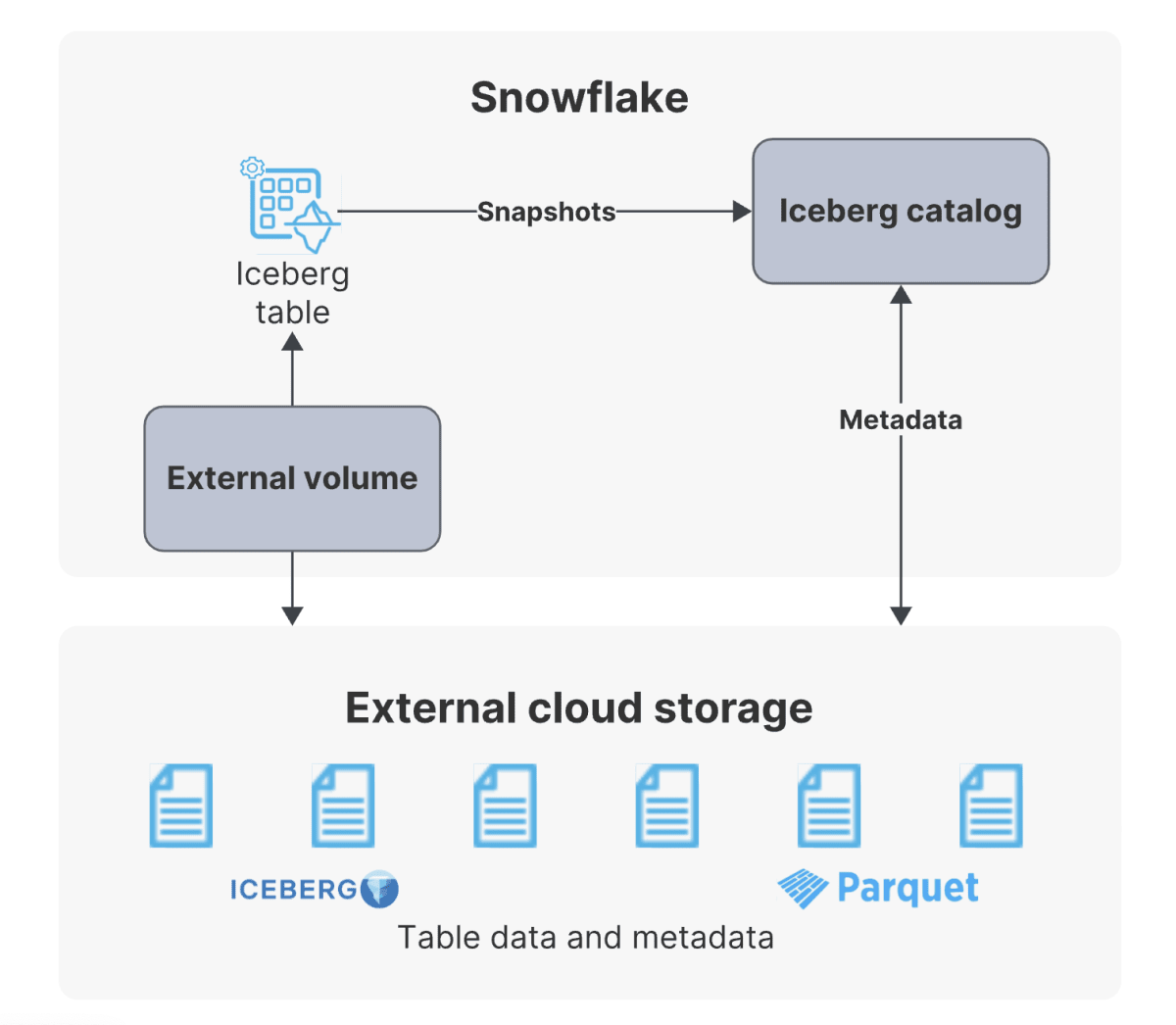

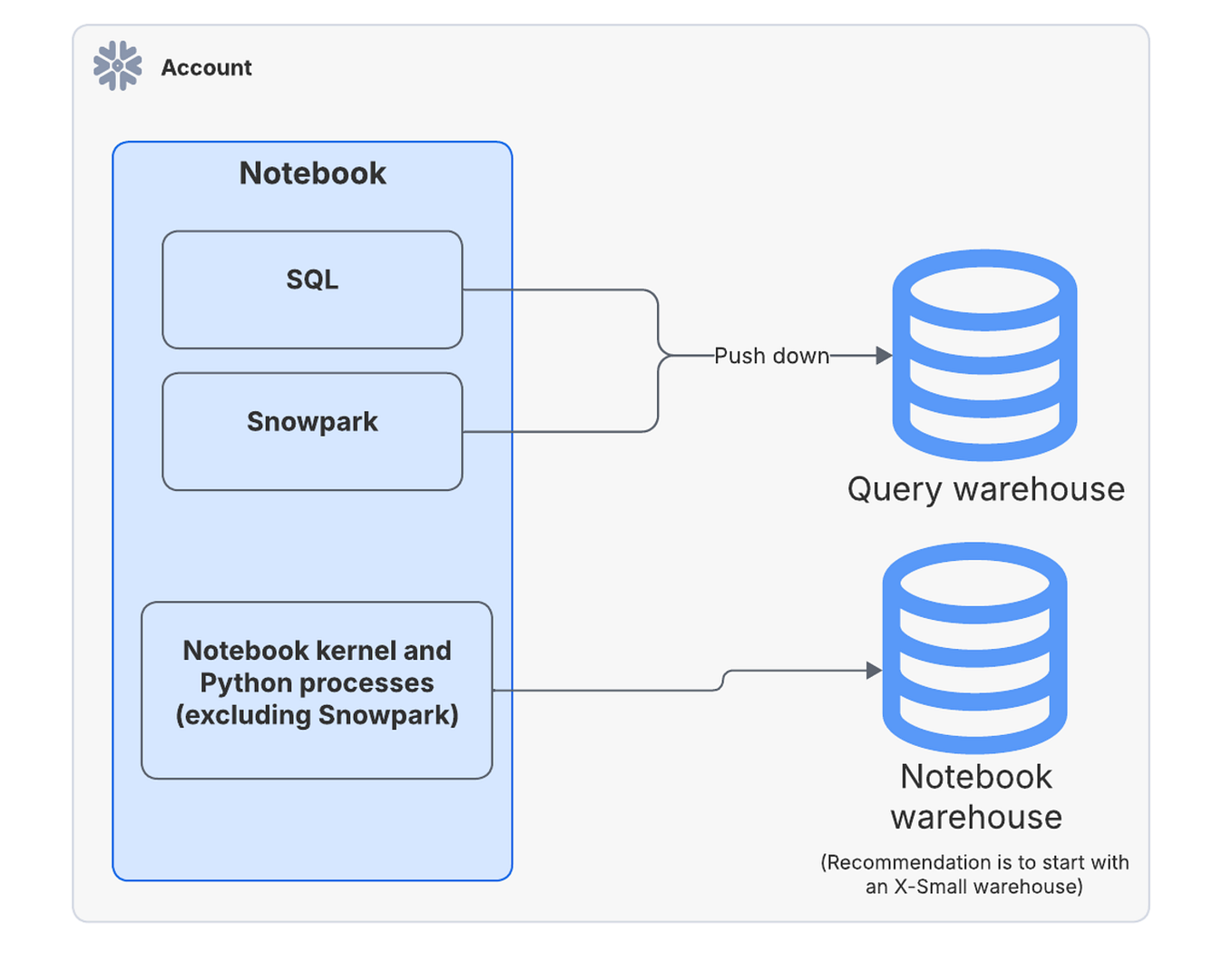

In Snowflake, CREATE CATALOG INTEGRATION creates a new catalog integration for Apache Iceberg™ tables in the account or replaces an existing catalog integration. The syntax of the command depends on the type of external… The name is a string that specifies the identifier for the catalog integration. A single catalog integration can support one or more…

CREATE CATALOG INTEGRATION (Apache Iceberg™ REST) creates a new catalog integration in the account or replaces an existing catalog integration for Apache Iceberg™… A further variant creates a new catalog integration for Apache Iceberg™ tables that integrate with Snowflake Open Catalog. Snowflake Open Catalog is a catalog implementation for Apache Iceberg™ tables and is built on the open source Apache Iceberg™ REST protocol. Snowflake Open Catalog is a managed…

To connect to your Snowflake database using Lakehouse Federation, you must create the following in your Databricks Unity Catalog metastore: …

From a post by とーかみ: Snowflake Iceberg tables have an automatic refresh feature for table metadata. Configure a catalog integration against the Glue Data Catalog, and the metadata…

For R2: a Snowflake account with the necessary privileges to… Create an R2 bucket and enable the data catalog.

When to implement clustering keys: Snowflake tables with an extensive amount of data where…
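As a rough illustration of the REST variant of the command described above, the sketch below renders a CREATE CATALOG INTEGRATION statement as text. The class `RestCatalogIntegration`, the helper names, the example URI, and the choice of bearer-token authentication are all assumptions made for illustration; the exact set of supported parameters should be checked against the Snowflake reference before running the generated SQL.

```python
import re
from dataclasses import dataclass

# Plain unquoted identifiers: a letter or underscore, then letters, digits, _ or $.
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_$]*")


@dataclass(frozen=True)
class RestCatalogIntegration:
    """Hypothetical holder for the values a REST catalog integration needs."""
    name: str
    catalog_uri: str
    bearer_token: str
    namespace: str = "default"


def _quote(value: str) -> str:
    # Double any single quotes so the value is a valid SQL string literal.
    return "'" + value.replace("'", "''") + "'"


def render_create_statement(spec: RestCatalogIntegration) -> str:
    """Render the SQL text only; nothing is sent to Snowflake here."""
    if not _IDENTIFIER.fullmatch(spec.name):
        raise ValueError(f"not a plain unquoted identifier: {spec.name!r}")
    lines = [
        f"CREATE OR REPLACE CATALOG INTEGRATION {spec.name}",
        "  CATALOG_SOURCE = ICEBERG_REST",
        "  TABLE_FORMAT = ICEBERG",
        f"  CATALOG_NAMESPACE = {_quote(spec.namespace)}",
        f"  REST_CONFIG = (CATALOG_URI = {_quote(spec.catalog_uri)})",
        # Assumption: bearer-token authentication; other auth types exist.
        f"  REST_AUTHENTICATION = (TYPE = BEARER BEARER_TOKEN = {_quote(spec.bearer_token)})",
        "  ENABLED = TRUE;",
    ]
    return "\n".join(lines)


# Example values are placeholders, not a real endpoint or credential.
example = RestCatalogIntegration(
    name="my_rest_catalog",
    catalog_uri="https://catalog.example.com/api",
    bearer_token="<token>",
)
print(render_create_statement(example))
```

Rendering the statement as text keeps the sketch independent of any particular Snowflake client; the resulting string could be pasted into a worksheet or passed to whichever connector is in use.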
There are 3 steps to creating a REST catalog integration in Snowflake: … For R2, one step is to create an R2 API token with both R2 and data catalog permissions.

CREATE CATALOG INTEGRATION can also create a new catalog integration in the account, or replace an existing one, for the following sources: Apache Iceberg™ metadata files and Delta table files.

SHOW CATALOG INTEGRATIONS lists the catalog integrations in your account. The output returns integration metadata and properties. In addition to SQL, you can also use other interfaces, such as Snowflake REST…
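Listing catalog integrations is done with SHOW CATALOG INTEGRATIONS, which accepts an optional LIKE pattern. The sketch below renders that statement and emulates the pattern matching locally, assuming the usual behavior of `%` (any run of characters) and `_` (any single character) with case-insensitive comparison. The function names and the sample integration names are hypothetical.

```python
import re
from typing import Iterable, List, Optional


def render_show_statement(pattern: Optional[str] = None) -> str:
    """Render the SQL text for listing catalog integrations."""
    if pattern is None:
        return "SHOW CATALOG INTEGRATIONS;"
    escaped = pattern.replace("'", "''")  # keep the literal well-formed
    return f"SHOW CATALOG INTEGRATIONS LIKE '{escaped}';"


def like_to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a LIKE pattern into an equivalent regular expression."""
    parts = []
    for ch in pattern:
        if ch == "%":
            parts.append(".*")   # any run of characters
        elif ch == "_":
            parts.append(".")    # exactly one character
        else:
            parts.append(re.escape(ch))
    return re.compile("".join(parts), re.IGNORECASE | re.DOTALL)


def filter_names(names: Iterable[str], pattern: str) -> List[str]:
    """Keep only the names that match the whole pattern."""
    rx = like_to_regex(pattern)
    return [n for n in names if rx.fullmatch(n)]


# Hypothetical integration names, used only to show the filter.
names = ["GLUE_CAT", "r2_data_catalog", "open_catalog_int"]
print(render_show_statement("%catalog%"))
print(filter_names(names, "%catalog%"))
```

The local filter is only a way to reason about which names a pattern would select; the authoritative result is whatever Snowflake returns for the rendered statement.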
Clustering keys are most beneficial in scenarios involving… You can also use this…
When a request is sent through an interface other than SQL, one possible response is that the request was successfully accepted but is not completed yet. A query parameter filters the command output by resource name.

What you Need to Understand about Snowflake Data Catalog Datameer

AWS Lambda and Snowflake integration + automation using Snowpipe ETL

Snowflake Releases Polaris Catalog Transforming Data Interoperability

New Snowflake features: how Iceberg Table and Polaris Catalog work

Integrating Salesforce Data with Snowflake Using Tableau CRM Sync Out

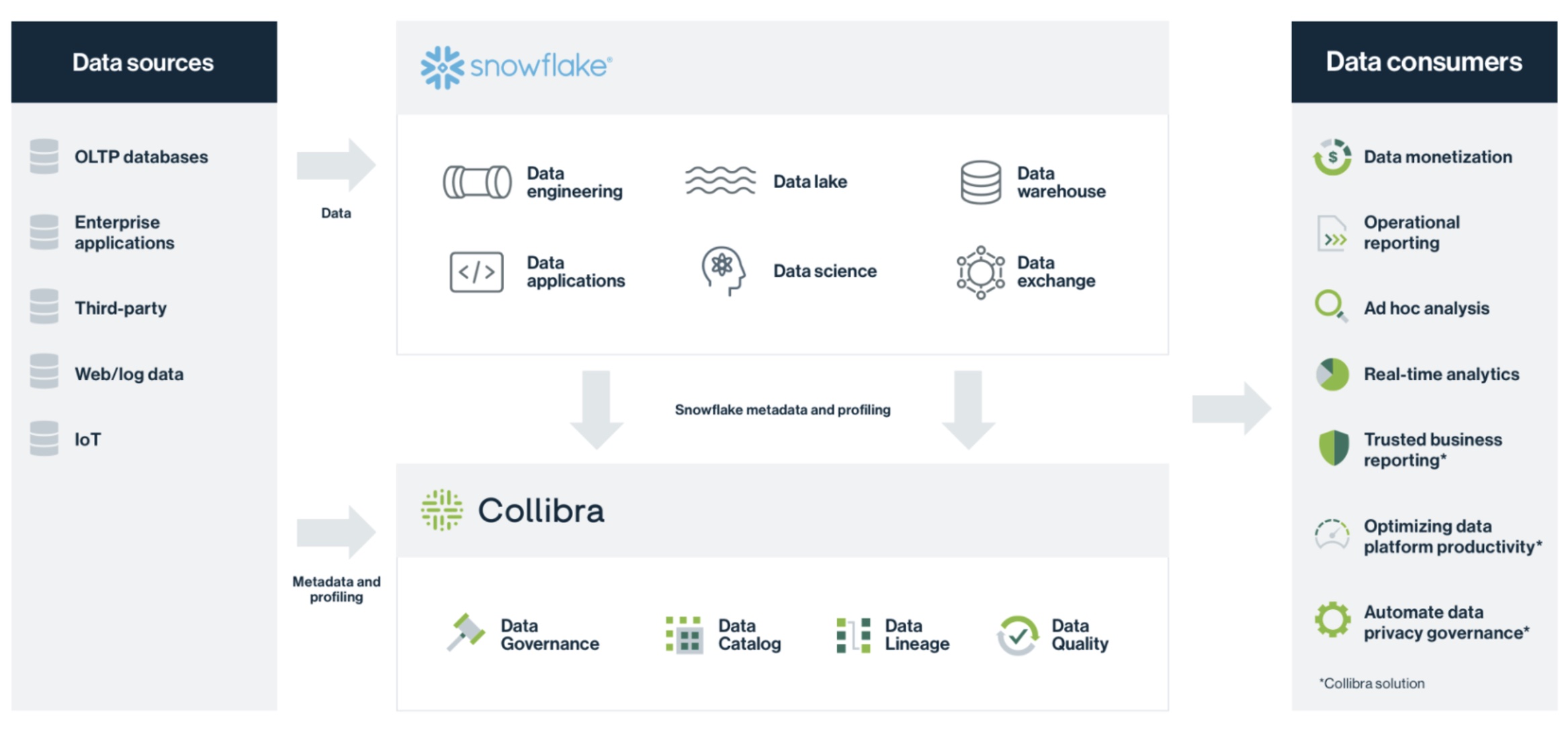

Collibra Data Governance with Snowflake

Snowflake Catalog Integration API Documentation Postman API Network

How to automate externally managed Iceberg Tables with the Snowflake

Simplify Snowflake data loading and processing with AWS Glue DataBrew 1

How to integrate Databricks with Snowflake-managed Iceberg Tables by

